Google supercharges Maps voice commands with Assistant speech recognition

Say goodbye to Android Speech Services

Google's Speech Services has been around since 2013, and it has been an important part of the Android operating system's history. Many apps have relied on it for text-to-speech and speech-to-text, but Google itself has used the service less and less as it has begun to show its age. Google Maps, which used Speech Services for years, has now replaced it with Google Assistant to provide a smoother user experience.

Google's new Tensor chip makes voice typing on the Pixel 6 faster than any other phone

Hopefully, the company can keep these promises

At today's Pixel 6 and 6 Pro announcement, Google explained that the Tensor chip enables some incredible camera voodoo. But Tensor is also improving other machine-learning-related features, like speech recognition. The chip is supposed to drastically improve the natural language processing behind speech-to-text.

Microsoft just bought the company that built Siri's voice recognition for Apple

Microsoft purchased Nuance, makers of Dragon speech-to-text tools, for $16 billion

Cortana, Microsoft's digital voice assistant named after that hologram lady from Halo, didn't exactly set the world on fire. Most Windows users I've seen treat it as an annoyance to be avoided rather than an integral part of the system, as Google's Assistant and Apple's Siri have become. But based on its latest corporate purchase, Microsoft might be taking another stab at voice-powered interaction.

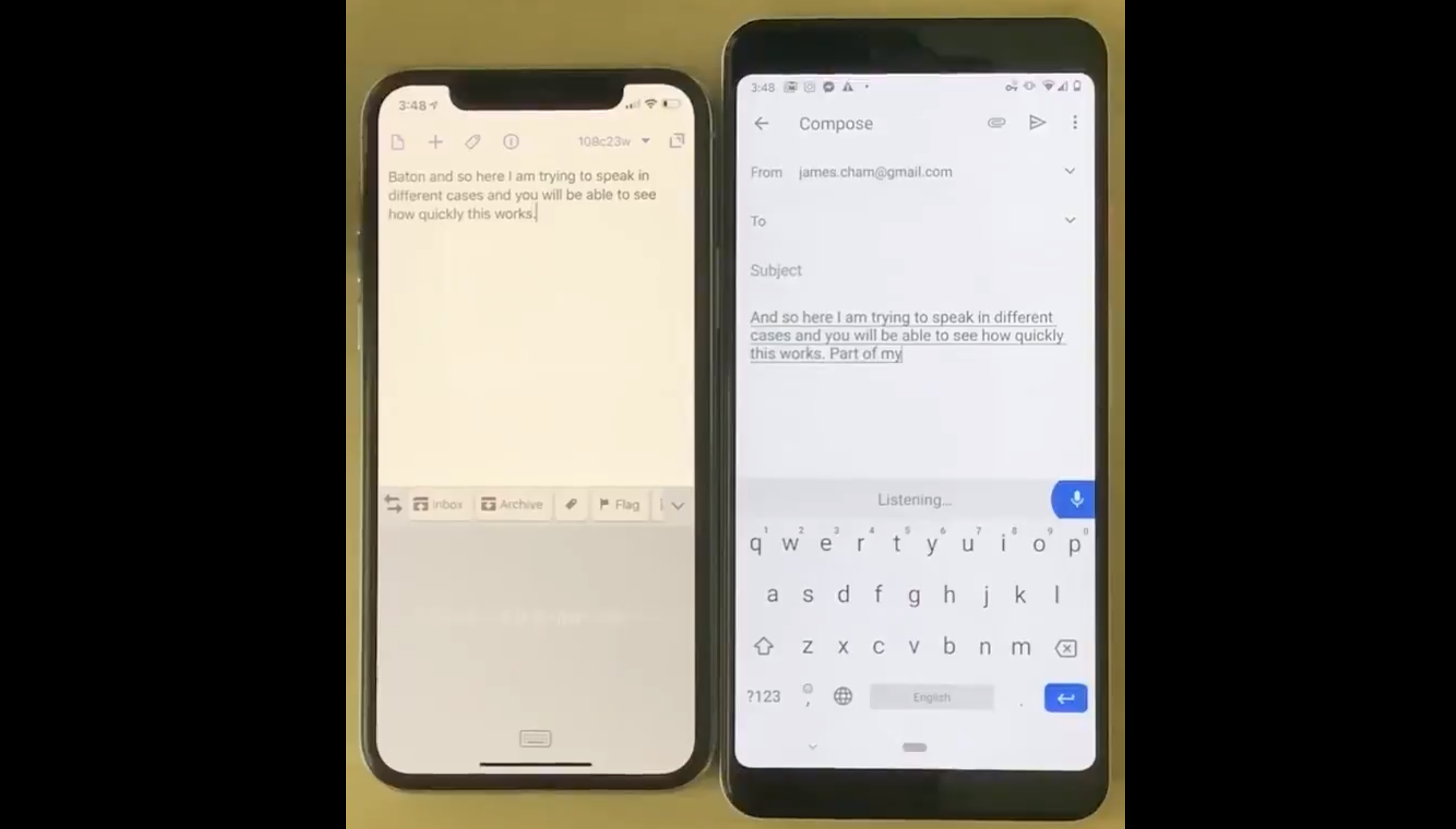

This viral video shows how the Pixel's live voice transcription absolutely destroys the iPhone's (and why it matters)

"Speed is a feature"

We all know Google's speech transcription technology is really, really, really good. Not only is it the best in the industry, it works without a data connection: Pixels have been transcribing audio on-device for some time now, thanks to Google's extremely impressive transcription algorithms that use the machine learning hardware in its smartphones. But accuracy isn't everything when it comes to transcription, even if it's the single most important feature. Speed matters too.

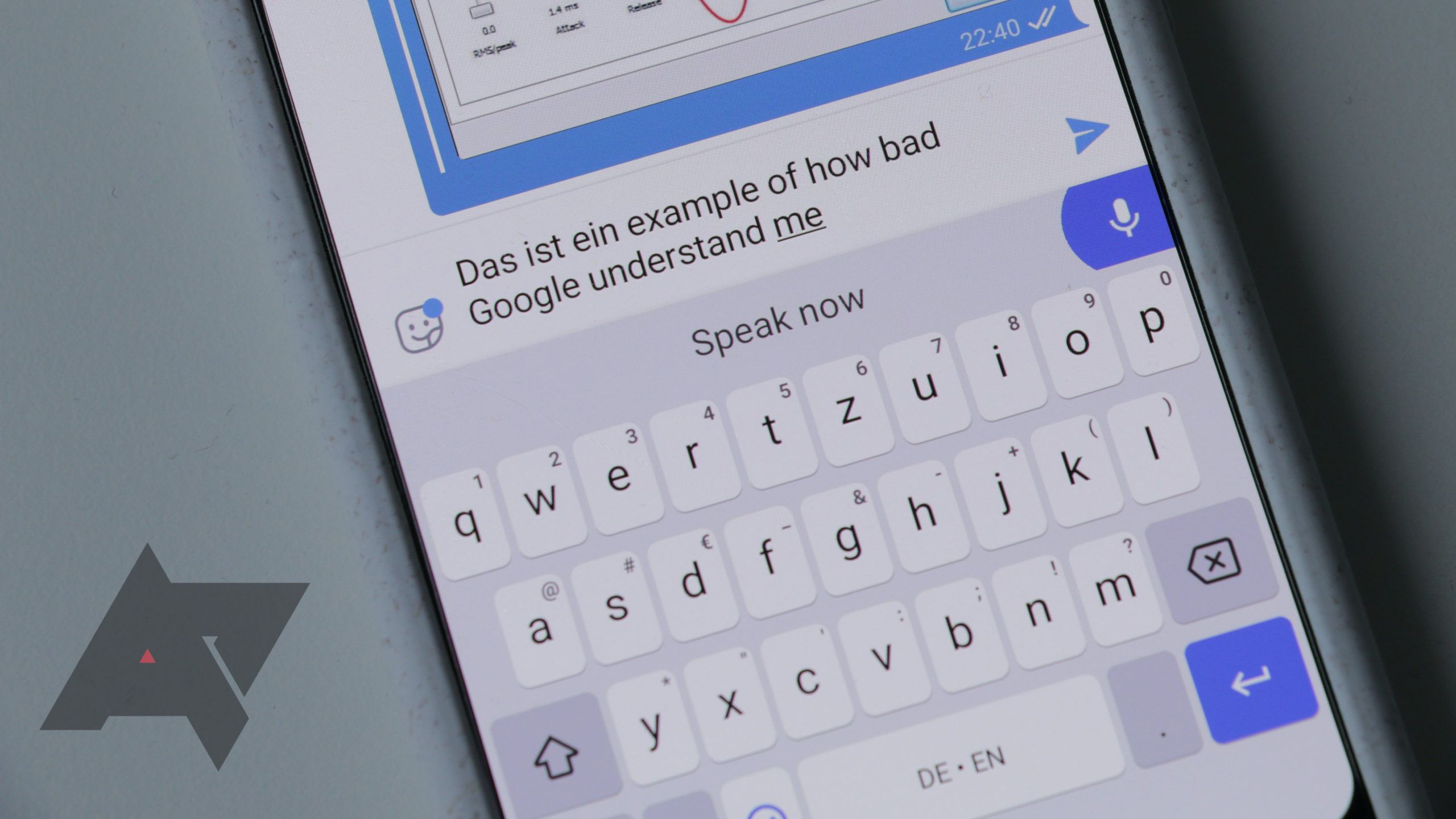

Google collects vast amounts of speech data across all of its products, and while it hasn't been too transparent about the practice, we as users profit from it for the most part. Speech recognition has consistently gotten better over the years, which has allowed impressive sci-fi tech like smart speakers to enter our homes. There's one department where Google needs to step up its game, though: multilingual speakers are having a hard time using more than one language on any Google product.

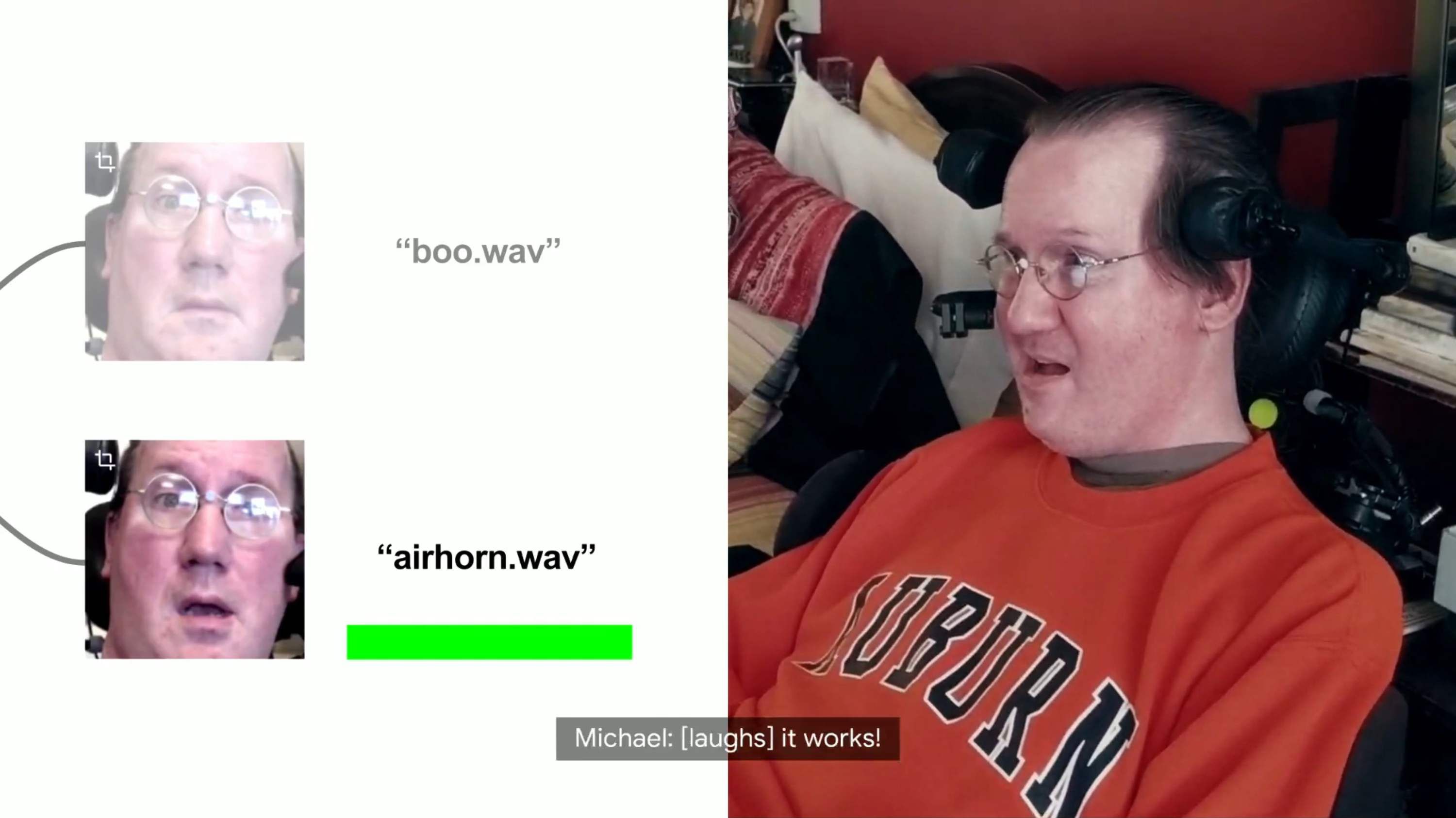

At I/O 2019, Google is going public with a bold AI-driven accessibility initiative it calls Project Euphonia. The goal is to transcribe the words spoken by people who have non-standard speech patterns — those who live with ALS, hearing impairment and other conditions — across messaging platforms to enable better communication between those people, their friends and family, and others.

Speech-to-text is something we take for granted a lot of the time. It's a complicated process, though, so much so that the heavy lifting is usually done remotely and the result is sent back to our devices. But Google has worked out a way to shrink the process to the point that it can be performed locally, and the fruits of that labor are coming to Gboard.

Speech recognition is one of the most powerful aspects of many Google products, particularly the Google app, where Voice Search relies on understanding what we're saying. The same is true of Gboard, which can type up entire messages from what you dictate to it. We may take it somewhat for granted these days, but it truly is a marvel. Now many more people around the globe can enjoy this feature, as Google has added support for 30 more languages.

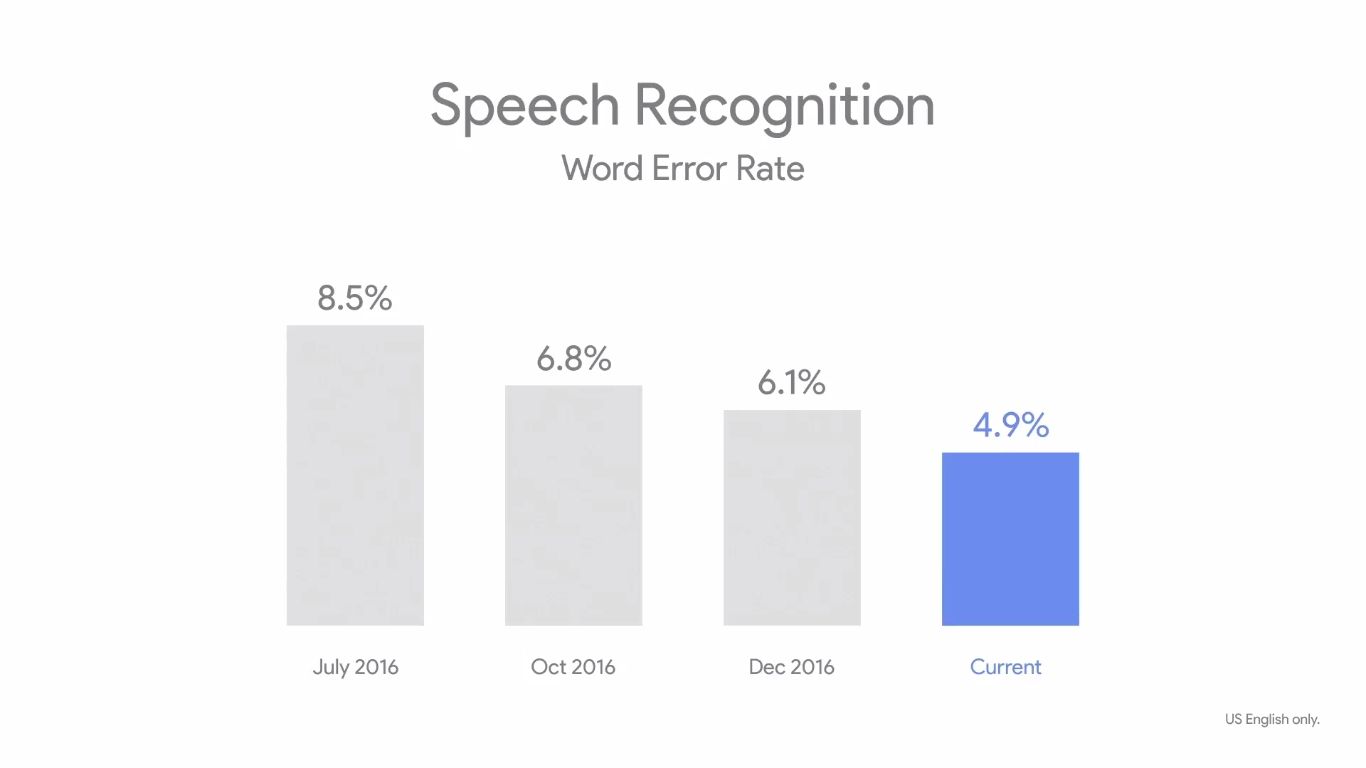

It's no secret that one of Google's strengths in recent years has been voice recognition. In my own experience, my Google Home picks up what I am trying to say almost every time, even in a low voice. Obviously the success rate varies by language and accent, but it is still pretty darn impressive.

As the years go by, it seems I get lazier. So the prospect of talking to my computing devices gets better and better as time passes (even if I have to yell across my house to my Google Home, which is amusing in its own right). However, always-on speech recognition comes at a price for battery-operated devices. Researchers at MIT claim to have come up with a solution: a dedicated speech recognition chip that can reduce power consumption by 90-99% in real-world devices.

Google's voice search function is pretty impressive, especially compared to some of the alternatives we used to have. But it's by no means perfect: proper nouns and regional accents can sometimes throw it for a loop, forcing you to fall back on typing. If you'd rather give it another go while restricting your voice input to specific words, that's now an option. One of our readers found this particular tool; we're not sure how long it's been active in Google Search, but it's quite handy.

We know, we know: you're tired of hearing about Siri and its many knockoffs. But, we assure you, this one is different. Very different. In fact, it's beyond anything we've ever seen before.