Google's Pixel phones produce some of the best still photography around, and the method behind it isn't magic. It's the result of hard technical labor and lots of machine learning models. When Google introduced a refined portrait mode on the Pixel 3, it used a wacky five-phone case to train its ML models, but the rig the company created to enable its new Portrait Light mode might be even wilder.

Traditionally, professional portrait photographers use special equipment like off-camera flashes and reflectors to put light exactly where it best flatters the subject. Of course, including all that equipment in the box of every Pixel phone would be cost-prohibitive, so instead, Google did what it does best and got to work on solving the problem with data.


The company constructed a custom lighting rig with 64 cameras, each with its own viewpoint, and 331 individually programmable LED light sources. Seventy people then agreed to sit center stage at this laser light show and pose for a series of photographs that show how the same face, in the same position, changes as the direction of the lighting changes.
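To see why a rig of individually programmable lights is so useful, here is a minimal sketch of image-based relighting. It assumes each capture was lit by a single LED, which the article does not state, and relies only on the fact that light adds up linearly: a photo lit by several lights equals the weighted sum of the photos lit by each light alone. The `relight` function and the tiny sample images are hypothetical and are not Google's code.

```python
def relight(single_light_images, weights):
    """Blend single-light captures into one image under a new lighting mix.

    single_light_images: one grayscale image per LED, each a list of rows
        of floats (assumption: every capture had exactly one LED switched on).
    weights: one non-negative intensity per LED.
    """
    if len(single_light_images) != len(weights):
        raise ValueError("need exactly one weight per captured image")
    height = len(single_light_images[0])
    width = len(single_light_images[0][0])
    out = [[0.0] * width for _ in range(height)]
    # Light is additive, so the relit pixel is a weighted sum of captures.
    for image, weight in zip(single_light_images, weights):
        for y in range(height):
            for x in range(width):
                out[y][x] += weight * image[y][x]
    return out


# Two hypothetical 1x2 captures: LED A mostly lights the left pixel,
# LED B mostly lights the right pixel.
led_a = [[0.8, 0.1]]
led_b = [[0.2, 0.6]]

# Dim LED A to half power and leave LED B at full power.
mixed = relight([led_a, led_b], [0.5, 1.0])
```

With 331 LEDs, the same sum can imitate a huge range of lighting setups from one sitting, which is presumably what makes the captured photos such rich training data.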

Some of the individual photos that came out of the lighting rig.

With enough training on these images, Google's algorithms can alter the lighting so that it appears to come from any number of directions, chosen based on how the subject of the photo positions their head. In studio portrait photography, the main off-camera light source is positioned a little above the eye line and off-center, so that's what Portrait Light on Pixel phones does, too. (You can still change the automatic selection after the fact in the Google Photos editor.)
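The studio rule of thumb above is easy to express in code. This is a rough sketch, not Google's method: it assumes the head pose is already known as yaw and pitch angles, and the 30-degree side offset and 20-degree raise are made-up illustrative defaults. The function name `key_light_direction` is hypothetical.

```python
import math


def key_light_direction(head_yaw_deg, head_pitch_deg,
                        side_offset_deg=30.0, raise_deg=20.0):
    """Return a unit vector pointing from the face toward a synthetic key light.

    Coordinates: +x is camera right, +y is up, +z points from the subject
    toward the camera. The offsets are illustrative assumptions only.
    """
    # Start from where the face is pointing, then move the light
    # off-center (yaw) and a little above the eye line (pitch).
    yaw = math.radians(head_yaw_deg + side_offset_deg)
    pitch = math.radians(head_pitch_deg + raise_deg)
    x = math.cos(pitch) * math.sin(yaw)
    y = math.sin(pitch)
    z = math.cos(pitch) * math.cos(yaw)
    return (x, y, z)


# A subject looking straight into the camera.
direction = key_light_direction(0.0, 0.0)
```

For a subject facing the camera, the resulting vector points up and to one side while still leaning toward the lens, which matches the classic key light position the paragraph describes.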

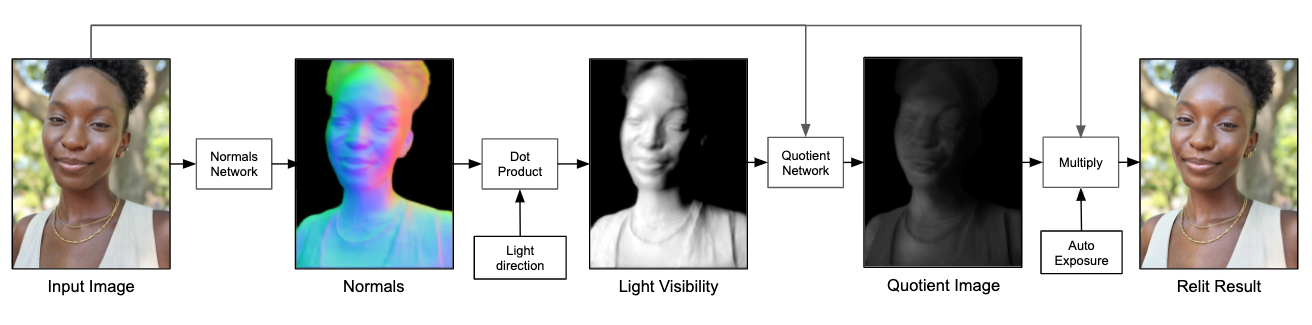

More machine learning models factor into Google's imaging pipeline as well, because once the direction of the portrait light has been picked, the image has to be edited based on how that light would hit the subject's unique facial features. Google trained these models with the help of a "light visibility map," which draws on actual physics.
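The article doesn't spell out how the light visibility map is built, so treat the following as one plausible reading rather than Google's implementation. It assumes a per-pixel surface normal is available and uses the standard diffuse shading term from basic physics: a surface is lit in proportion to how directly it faces the light, and not at all when it faces away. The function name and sample normals are hypothetical.

```python
def light_visibility_map(normals, light_dir):
    """Per-pixel diffuse term max(0, n . l) for unit normals and a unit light.

    normals: rows of (nx, ny, nz) unit surface normals, one per pixel
        (assumption: these come from some earlier estimation step).
    light_dir: (lx, ly, lz) unit vector pointing toward the light.
    """
    lx, ly, lz = light_dir
    return [
        [max(0.0, nx * lx + ny * ly + nz * lz) for (nx, ny, nz) in row]
        for row in normals
    ]


# Three hypothetical pixels: facing the camera, facing right, facing left.
normals = [[(0.0, 0.0, 1.0), (1.0, 0.0, 0.0), (-1.0, 0.0, 0.0)]]

front_lit = light_visibility_map(normals, (0.0, 0.0, 1.0))
side_lit = light_visibility_map(normals, (1.0, 0.0, 0.0))
```

A map like this tells a model which parts of the face the chosen light can reach, so it can brighten a cheek that faces the light and leave the far side of the nose in shadow.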

Google optimized the Portrait Light pipeline to run on mobile devices, keeping its total size under 10MB.

Owners of the Pixel 4 and up get Portrait Light applied automatically whenever pictures are captured in the default mode, or in Night Sight, and faces are detected. It even works on groups. If you're still rocking the Pixel 2 or Pixel 3, you can try out Portrait Light on existing portrait photos in the Google Photos app.

Google isn't the first smartphone manufacturer to introduce portrait lighting effects to its customers, but it may have gone about it in the most Google-y way possible, using a plethora of advanced algorithms and machine learning models. Marc Levoy may not work on the Pixel camera anymore, but he liked to demystify the process by saying it wasn't "mad science," just "simple physics." Or in this case: just simple physics and a massively complicated lighting rig.

Source: Google