
Earlier this month, Google announced new "filters" would make their way to Google Lens, such as the ability to point your phone at a restaurant menu and get recommendations on the most popular dishes. These new features are starting to roll out to some users, together with a design revamp.

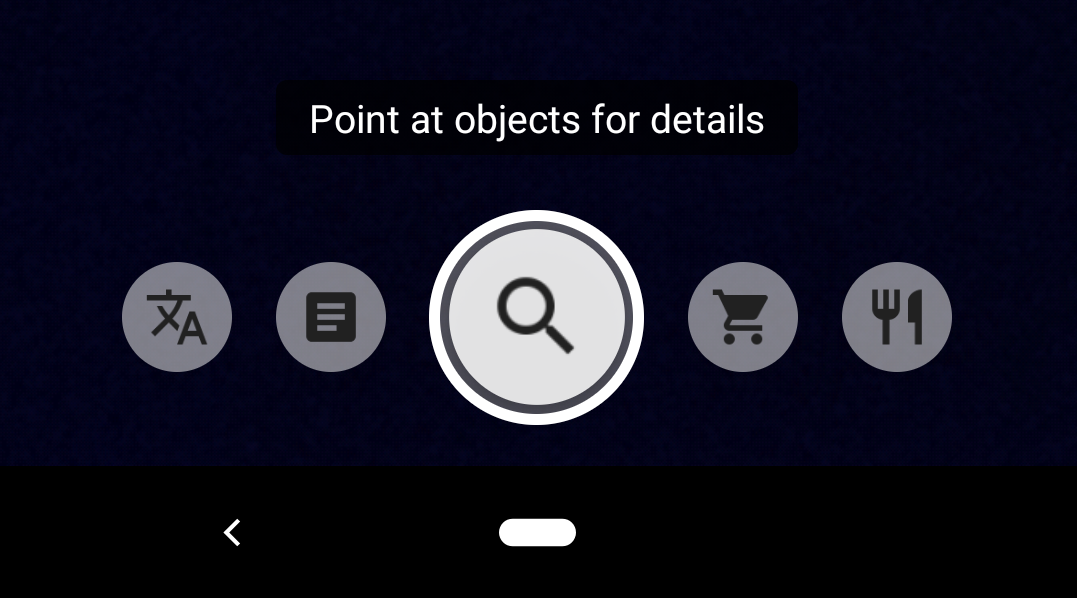

The new user interface can be accessed via Google Assistant and Google Photos for the lucky ones who have received it. It comes with five modes you can scroll through to help refine detection and provide more specific results: Auto, Translate, Text, Shopping, and Dining. The first one is similar to the previous version, where the application automatically analyzes what it’s fed to detect objects. The next two let you point your camera at text to either translate or copy it. Shopping comes in handy at the mall, as it lets you scan barcodes or automatically recognize items like clothing or furniture to look them up online. Finally, the Dining mode will provide menu recommendations and help you split the check when you’re at a restaurant.

As shown above, the various modes appear in a carousel at the bottom of the screen, and a shutter button freezes the image to start automatic recognition. There’s also an icon in the top right corner that lets you pick an existing image from your handset's gallery to analyze it.

Finally, there's a new cropping feature that lets you select only a portion of the screen or image for Lens to analyze, and thus get better-targeted results.

The Lens revamp has shown up for some people using the Google app 9.91 beta. We've seen it on both Pixel and Samsung devices, but it's rolling out through a server-side switch, which is hardly surprising from Google. We’ll keep you posted once it’s more generally available.

UPDATE: 2019/05/29 10:45am PDT BY STEPHEN SCHENCK

We've recently been hearing reports of significantly expanded availability, but have been waiting for Google to make some formal announcement regarding the spread of these new Lens features. Sure enough, the company took to Twitter earlier today to share the news:

At least, it should be going live. We're still not seeing it on all devices, but based on Google's announcement, that will probably change very soon.

Source: 9to5Google

Thanks: Jman100, Nicola