At I/O 2019, Google is going public with a bold AI-driven accessibility initiative it calls Project Euphonia. The goal is to transcribe, across messaging platforms, the words spoken by people with non-standard speech patterns (those living with ALS, hearing impairment and other conditions) so that they can communicate more easily with friends, family and others.

Google engineers worked with the ALS Therapy Development Institute and ALS Residence Initiative to record voice samples from ALS patients. The recordings are converted into spectrograms, visual representations of the audio, which are then fed into Google's machine-learning servers.

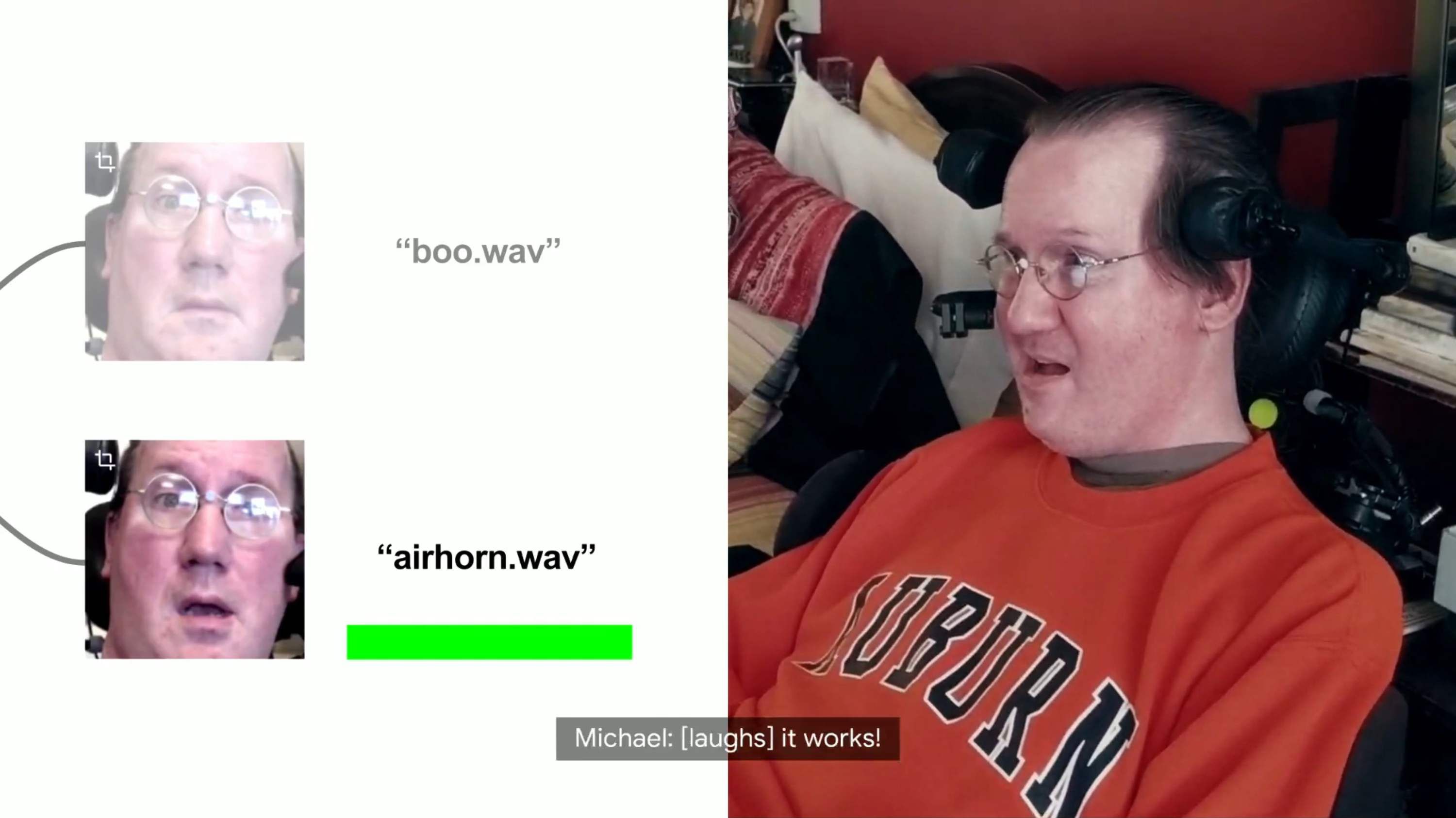
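
For readers curious what "converting audio into a spectrogram" involves, here is a minimal sketch in Python using NumPy. It is not Google's pipeline, whose details are not public in this article. The `spectrogram` function, its frame and hop sizes, and the synthetic 1 kHz tone standing in for a recorded voice sample are all assumptions chosen for illustration.

```python
import numpy as np


def spectrogram(signal, frame_len=256, hop=128):
    """Magnitude spectrogram via a short-time Fourier transform.

    Rows are frequency bins, columns are time frames.
    (Hypothetical helper; frame_len and hop are illustrative defaults.)
    """
    window = np.hanning(frame_len)  # taper each frame to reduce spectral leakage
    n_frames = 1 + (len(signal) - frame_len) // hop
    frames = np.stack([
        signal[i * hop : i * hop + frame_len] * window
        for i in range(n_frames)
    ])
    # rfft keeps only the non-negative frequencies of a real-valued signal
    return np.abs(np.fft.rfft(frames, axis=1)).T


# Assumed stand-in for a voice sample: one second of a 1 kHz tone at 16 kHz.
sample_rate = 16000
t = np.arange(sample_rate) / sample_rate
tone = np.sin(2 * np.pi * 1000 * t)

spec = spectrogram(tone)
peak_bin = int(spec.mean(axis=1).argmax())
peak_hz = peak_bin * sample_rate / 256  # each bin spans sample_rate / frame_len Hz
```

The resulting 2D array can be rendered as an image, with time on one axis, frequency on the other and brightness showing energy, which is the kind of input an image-style machine-learning model can consume.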

The company hopes it can train its algorithms to recognize certain aural cues and gestures and then determine which messages to speak or send, and which apps or services to open, in response. The work should also aid in developing modified transcription modes for apps such as Google's Live Transcribe.

But the project also needs more voice samples to train those algorithms. Anyone willing to have their recitations recorded can sign up with this form.

Source: Google