Get ready for the little person living inside your phone and speaker to sound a lot more life-like. Google believes it has reached a new milestone in the quest to make computer-generated speech indistinguishable from human speech with Tacotron 2, a system that trains neural networks to generate eerily natural-sounding speech from text, and they have the samples to prove it.

In a research paper published earlier this month, though yet to be peer-reviewed, Google asserts that previous approaches to text-to-speech (TTS) systems have thus far failed to achieve a genuinely natural sound. Techniques such as concatenative synthesis, in which pre-recorded samples of speech are stitched together, and statistical parametric speech synthesis, Google says have been insufficient, explaining, "The audio produced by these systems often sounds muffled and unnatural compared to human speech."

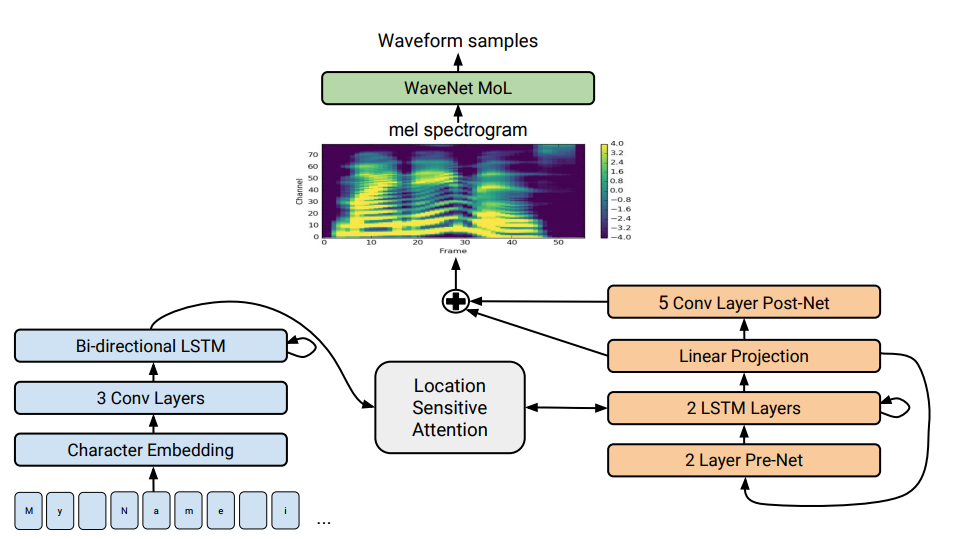

With Tacotron 2 (which is not the same as the world-ending super-weapon used by Lord Business), the company says it has incorporated ideas from its previous TTS systems, WaveNet and the first Tacotron, to reach a new level of fidelity. The full description of the new system is highly technical and nigh impossible for a layperson to parse. In a post at the Google Research Blog, software engineers Jonathan Shen and Ruoming Pang explain:

In a nutshell, it works like this: We use a sequence-to-sequence model optimized for TTS to map a sequence of letters to a sequence of features that encode the audio. These features, an 80-dimensional audio spectrogram with frames computed every 12.5 milliseconds, capture not only pronunciation of words, but also various subtleties of human speech, including volume, speed and intonation. Finally these features are converted to a 24 kHz waveform using a WaveNet-like architecture.

That's one hell of a nutshell.

The result of all this work is a digital voice that can handle some of the most subtle nuances of human speech, and when you listen to the small collection of samples they provide, some of the advancement becomes immediately clear. Not only is Tacotron 2 able to handle increasingly complex words and correctly interpret the intent of text with errors, it has also noticeably improved on the ability to grasp the nuances of things like punctuation, intonation, and pronunciation based on the semantic context of a sentence.

Listen here for how Tacotron 2 correctly pronounces and intones the words "read," "desert," and "present" based on their intended meanings.

“He has read the whole thing.”

[audio wav="https://www.androidpolice.com/wp-content/uploads/2017/12/nexus2cee_read_past.wav"][/audio]

“He reads books.”

[audio wav="https://www.androidpolice.com/wp-content/uploads/2017/12/nexus2cee_reads.wav"][/audio]

“Don't desert me here in the desert!”

[audio wav="https://www.androidpolice.com/wp-content/uploads/2017/12/nexus2cee_desertdesert.wav"][/audio]

“He thought it was time to present the present.”

[audio wav="https://www.androidpolice.com/wp-content/uploads/2017/12/nexus2cee_presentpresent.wav"][/audio]

There are several more samples available here for your uncanny listening pleasure, but Google really shows how confident it is with its TTS capabilities by pitting Tacotron 2 samples against recordings of a real human reading the same text. Here are a couple of them:

“She earned a doctorate in sociology at Columbia University.”

Is this the real human?

[audio wav="https://www.androidpolice.com/wp-content/uploads/2017/12/nexus2cee_columbia_gen.wav"][/audio]

Or is this?

[audio wav="https://www.androidpolice.com/wp-content/uploads/2017/12/nexus2cee_columbia_gt.wav"][/audio]

It is quite impressive to my ears, though I think it's possible to distinguish which recording is the human recording by listening for the effects of the room in which the real woman is reading, but I could be wrong. It's also important to note that it's quite a simple matter for the human reader to play up the "computer-y" inflections in her reading in order to lower the bar somewhat for the digital voice.

There is one important hitch to all of this. You'll notice that the Tacotron 2 voice is the same voice used by Google for its Assistant and Now products for years, and that's by necessity. To bring a new voice up to this level, the system would have to be trained again from the beginning.

I've personally found little to complain about when it comes to my various digital assistants' voices. Alexa has never sounded "natural," per se, but the quirkiness of the voice is almost now part of its character. Rather than fool us into thinking that a real person is talking, we more or less accept that "this is just what Alexa sounds like." (Also, I've found Siri to be much more charming when I change the voice to that of an Australian male.)

So while the computer-to-human comparisons are very cool, I think it's the intonation and contextual pronunciation examples that are truly impressive.

And just think, when the robot apocalypse comes and AI takes over the planet (and the universe), we'll all be comforted by the soft, nurturing assurances of an indistinguishably human-sounding voice, just before the lights go out.

Source: Google

Via: Quartz